The good – it works

The e-book accessibility audit (August 2016 to November 2016) was a joint project between the disability and library services of several UK higher education institutions, Jisc and representatives from the book supply industry. More information on the partners is available.

The audit had three main purposes:

- To create a sort of “accessibility Esperanto” that gave library and disability staff with no knowledge of accessibility standards a way of communicating with suppliers.

- To create a yardstick to help compare the accessibility of different platforms in a (relatively) objective way.

- To evidence “accessibility attrition” along the supply chain so that publishers could see the extent to which accessibility investments might be undermined by third-party delivery mechanisms.

We were overwhelmed by the response: 33 universities and 5 academic suppliers carried out testing. In all, 44 platforms were tested, covering 65 publishers and nearly 280 e-books.

Within a few weeks of the first drafts being available, one of the major suppliers, Askews and Holts, contacted customers with accessibility guidance for their platform. They were “pleased to use the audit as an opportunity to clarify our accessibility guidance…”. The team behind the audit has since been asked to contribute to conferences and journal articles, and has been shortlisted for an International Excellence Award for Accessible Publishing.

The bad – it’s only part of the accessibility picture

There is a fine line between simple and simplistic. To make the project “accessible” in the widest sense of the word, we simplified both the tests and the final data displayed. The scale of participation, and the fact that some 70% of testers were auditing accessibility for the first time, suggest we succeeded in being “simple enough to be accessible”. But it was a crowd-sourced project: we had minimal influence on sampling and little opportunity to quality-assure the testers’ work. It is important to be aware of these caveats and of the context.

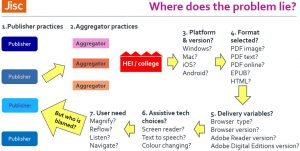

The resulting interactive Excel spreadsheet allows a nuanced interpretation of the results. Maximum and minimum scores are plotted, and rankings change depending on the features you require. However, it is dangerous to reduce the long journey between publisher and reader to a single number. The image shows that the publisher is merely the beginning of that journey, and the accessibility features a publisher adds to its files can be changed at any later stage.

The aggregator may ignore the accessibility metadata the publisher provides, or may deliver content through a bespoke interface with poor accessibility. Or it may improve on the publisher’s accessibility. Inside the learning provider, the user experience may depend on which operating system the reader uses and whether it is up to date. Users may choose familiar formats (eg PDF) without realising that a less familiar format such as EPUB could offer better accessibility. The institution’s IT infrastructure also plays a part: out-of-date software (browsers, or Adobe Reader and Adobe Digital Editions) can affect the user experience, as can the reader’s choice and version of assistive technology.

All these variables combine to determine whether a user’s needs are met. Unfortunately, a bad experience may be blamed on a publisher even when the reading experience was largely outside their control.

The ugly – confrontation or collaboration?

We learn a language to communicate with others – not shout at them. If this audit has succeeded in creating an accessibility Esperanto, then we can use it productively to communicate with stakeholders. Collaboration is better than confrontation.

This is not to let anybody off the hook. If a product creates barriers for disabled students, it will create extra costs in supporting those students, and there should be accountability for those costs. However, the best starting point is a constructive conversation to identify where the problem lies and how it might be solved. Is the problem with:

- the publisher’s source file? Check the publisher’s website for information on the accessibility features of their digital resources.

- the aggregator’s delivery mechanism? Check the aggregator’s website for information on the accessibility features of their platform or any accessibility requirements they impose on contributing publishers.

- your own institution, running old software on operating systems that should have been updated years ago?

And when it comes to reading lists, remember that the publisher is not responsible for the platform you choose to access their books through. Your experience may be better on the publisher’s own platform than on a third-party aggregator. Or perhaps the publisher has partnered with the RNIB Bookshare service and an accessible version is instantly available. Or perhaps they simply have an excellent customer services department that responds to requests within 48 hours with simple and sensible licences.

The audit is not a stick to beat the publishers (or even the aggregators). The audit team had significant help and ‘critical friend’ engagement from the industry. Accessibility is a partnership: use your accessibility Esperanto to communicate clearly and effectively but beware of using simple metrics in simplistic ways.

Conclusion

There is a tremendous wealth of data in the audit. Where do you start?

If you work with students, encourage them to try formats like EPUB and HTML. These are likely to be easier to personalise for an accessible experience.

If you work in procurement, a good starting point is to insist on seeing the accessibility credentials of systems. If suppliers only talk in terms of standards (W3C, Section 508, etc.), ask them to translate these into the specific, demonstrable aspects used in the e-book audit. Standards are great, but compliance has to be demonstrable to be believable.

If you are a publisher or aggregator, explore your best and worst scores. How can you direct users to your most accessible formats and to your accessibility features? How can you advocate for improved accessibility and interoperability in tools such as Adobe Digital Editions?

And if you benefit from the audit – whoever you are – let us know via ebookaudithelp@gmail.com.